Taylor University is updating its artificial intelligence (AI) policy, introducing a new academic AI platform for faculty and pursuing a grant to fund research into the impact of AI.

These changes are being made by the AI Steering Committee, a group of faculty and staff who gather to think about AI, said Chris Jones, university vice president and member of the committee.

The AI Steering Committee has drafted an AI Governance Policy for Taylor. While not finalized, the draft seeks to clarify principles, expectations and boundaries for future policies and decisions Taylor may make surrounding AI, Jones said.

Jones emphasized the need for Taylor’s AI policy to reflect the university’s mission.

“We are not approaching AI simply as a technology to adopt or avoid,” Jones said. “Instead, we are asking how tools like these intersect with Christian formation, academic integrity, human dignity and the kind of community we are called to cultivate. That framing has guided this work from the start.”

In service of that end, Taylor is seeking a grant from the Lilly Endowment’s Artificial Intelligence in Higher Education Initiative (AIHE).

The AIHE initiative is a Lilly Endowment project to help Indiana colleges and universities address the rapidly changing AI landscape, according to a Lilly Endowment press release.

“The aim of the initiative is two-fold:” the press release said. “To help Indiana colleges and universities 1) consider more fully the challenges and opportunities that artificial intelligence (AI) presents for their institutions and their students, and 2) develop new or enhance existing strategies and programs to improve their students’ education opportunities and outcomes and their preparation to prosper in the workplace and life in a future that will be increasingly shaped by AI.”

Taylor received a planning grant in December and is using that money to work with Resultant, a consulting company, to apply for a $5 million implementation grant. If Taylor wins the grant, the money will be used to deepen Taylor’s engagement with and exploration of AI.

Taylor is also making Boodlebox, an academic AI platform, available to faculty as part of a pilot program, Benjamin Hotmire, director of the Bedi Center for Teaching and Learning Excellence (BCTLE), said.

Boodlebox offers users multiple AI models, such as Claude 4.5 Opus, Claude 4.5 Sonnet, ChatGPT 5 and Gemini 4.1. It also enables users to create their own custom bots.

A major attraction of Boodlebox is its emphasis on user privacy, Hotmire said. Boodlebox is a data-secure platform; it does not train AI models on user data.

Boodlebox allows for collaborative use, where users can give other users access to a conversation or to a folder of conversations. Such a feature may prove useful in the classroom setting, Hotmire said.

Some faculty are already using Boodlebox in their classrooms, giving students full access to the tool, Hotmire said. While he was unable to provide a list of specific classes, Hotmire said they were intentionally spread across different disciplines, like the sciences, social sciences and humanities.

A faculty listening session is scheduled for March 23, where faculty who have been using Boodlebox can share their feedback on and experience with the tool. Anecdotal feedback so far has been positive, even though the tool has been in use for only three weeks, Hotmire said.

“They maybe haven’t had a chance to use it in real depth,” Hotmire said. “But one faculty member emailed me and said, ‘Thank you, this platform is terrific.’ So some initial feedback has been, ‘This is great.’”

Hotmire said Boodlebox is a pilot and is still being evaluated to see whether it meets Taylor’s needs and educational philosophy.

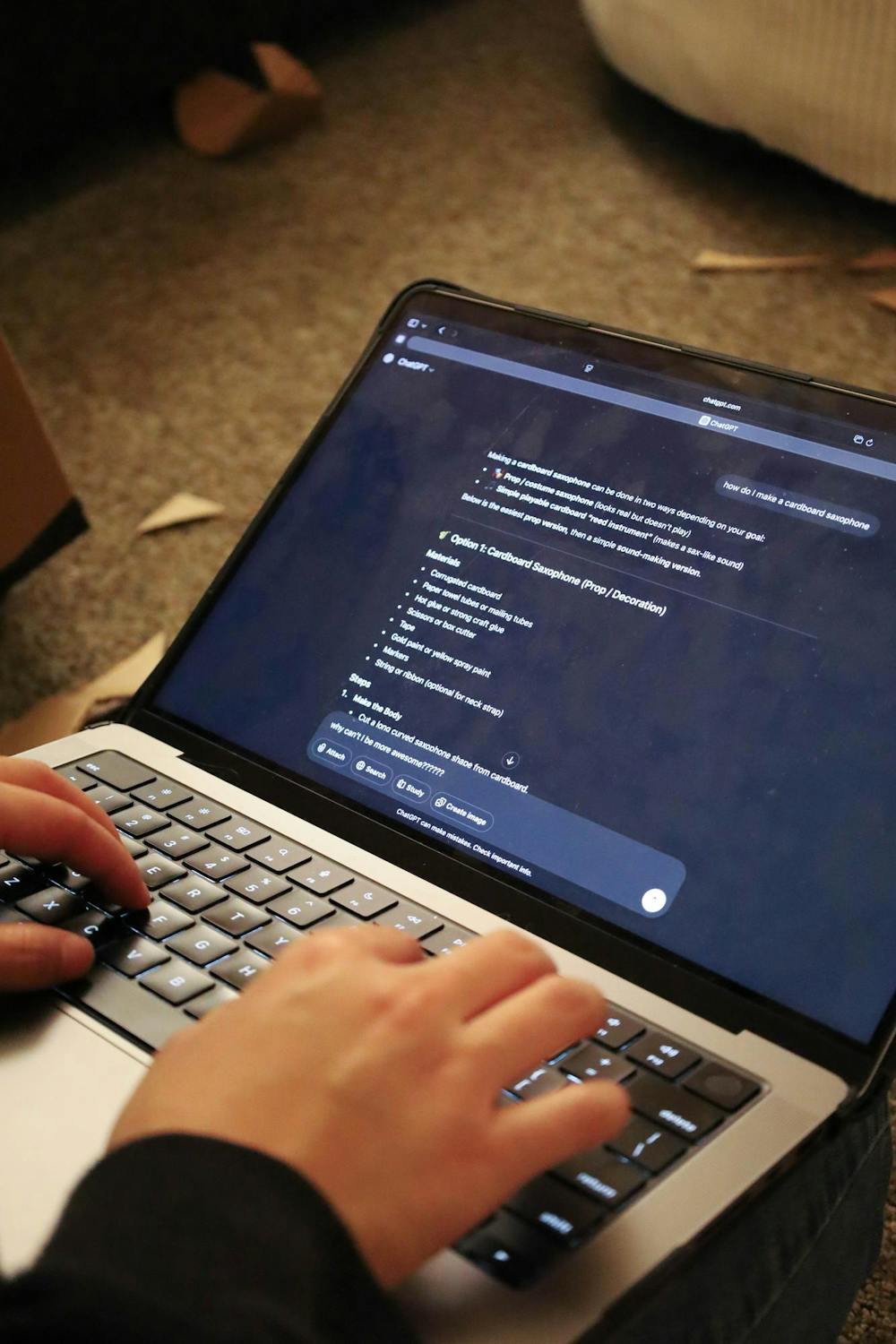

Even as Taylor moves ahead with its AI policy, many faculty are adjusting to the use of AI in the classroom.

Stefan Brandle, a computer science professor who has been working with AI since the early ’90s, wants students to gain familiarity with AI and requires its use on some assignments, he said.

“I say, ‘You have to do this with AI and here’s why,’” Brandle said, “‘because I want you to start getting experience with how well it works and what the hiccups are and so on. In particular, if you’re going to look out for job interviews, I want you to be able to look people in the face and say, “Oh yeah, I’ve done a number of projects with AI.”’”

While claims that AI can write perfect code by itself are overhyped, a partnership between human programmers and AI is developing, he said. The programmer spends time understanding a problem and coaching AI into writing code that solves it, then corrects the AI’s code.

Such a partnership is becoming standard in the industry, and undergraduates need to be prepared for it, Brandle said.

In the History, Global, and Political Sciences Department, a more cautious attitude prevails, Elizabeth George, a professor of history, said.

“I don’t think anyone’s opposed to the grant, and, like, exploring it more,” George said. “I think there’s more of a hesitation, because unlike in computer science, AI is more of a threat to the basics of what we teach, you know, like how to write a historical argument, how to take historical events and use them to support a thesis. That kind of stuff, it can do just fine.”

While social studies education majors might have to use AI in their careers and archives are using non-generative AI to aid research, AI’s frequent hallucinations make it unsuitable for higher-level research, George said.

George has found that the most useful function for AI has been making randomized group lists.

In addition, the amount of dishonest AI use has dramatically increased since ChatGPT came out, George said.

“I was going for a while without any academic dishonesty, and now it’s been every semester several people,” she said. “So that’s frustrating, but it also often makes me feel like we’ve got to find a way to work with this tool. Students are already using it, and there’s only so long that we can punish it.”

The administration is glad a conversation about AI is happening on campus, especially between faculty and students, Hotmire said. Since AI use will look different in each department and discipline, it’s important that faculty and students think about what a healthy use of AI looks like.

Many students have talked about ethical issues surrounding AI, such as academic integrity, and are seeking a healthy approach to AI, he said.

Conversations around AI are similar to debates around the impact of social media, another highly controversial technology. Hotmire feels the Church largely sat out that debate. The Church should not sit this round out, Hotmire said.

“Taylor is not the Church,” he said. “We aren’t a church, but we are a Christian institution, and so we do think it’s good that we’re having these conversations. And because it seems like AI, in whatever form it’s in, is here to stay, we need to think about the most healthy way to engage with AI. And I’m using that phrasing pretty specifically — engage with AI.”